AI now sits at the center of the technology stack and is used across nearly every sector. Yet AI (artificial intelligence), for all its revolutionary impact on industry and science, has limits: it runs on classical computers, so it can only go as far as the available computational power allows. Advances in quantum computing could significantly improve the performance of machine learning and AI. This article looks at how quantum computing may affect AI and what that means for sectors such as business, industry, and the economy. Emerging work that combines quantum computing and AI is opening up new possibilities, with the potential to redefine industries and drive future breakthroughs.

If the following figures reported by Business Insider hold up, quantum computing is a strong candidate for the future of computing.

- For certain problems, quantum computers are reported to be millions of times faster than classical computers.

- Predictions indicate that the quantum computing market will attain a value of $64.98 billion by the year 2030.

- The development of quantum computing tools is a competitive endeavor, with industry giants such as Microsoft, Google, and Intel vying for the lead.

Quantum Computing: What Is It?

Quantum computing is computing based on quantum mechanics. Classical computers encode data as bits, each of which is either 1 or 0. A quantum computer uses qubits, which can exist in a superposition of 0 and 1: until it is measured, a qubit carries an amplitude for each value, and a measurement returns 0 or 1 with the corresponding probability. Quantum physics is the fundamental science behind quantum computing and is what enables these computational capabilities.
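
As a rough illustration of superposition, the sketch below simulates a single qubit on a classical machine with NumPy. It is not code for real quantum hardware; the variable names (`ket0`, `H`, `samples`) are chosen here for illustration. It applies a Hadamard gate to the 0 state and then samples measurement outcomes from the resulting probabilities.

```python
import numpy as np

# The basis state |0>, written as a vector of two amplitudes.
ket0 = np.array([1.0, 0.0], dtype=complex)

# Hadamard gate: turns |0> into an equal superposition of |0> and |1>.
H = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)

state = H @ ket0

# Measurement probabilities are the squared magnitudes of the amplitudes.
probs = np.abs(state) ** 2
probs = probs / probs.sum()  # guard against floating-point rounding

# Each simulated measurement yields a definite 0 or 1.
rng = np.random.default_rng(seed=0)
samples = rng.choice([0, 1], size=1000, p=probs)

print("amplitudes:", state)
print("probabilities:", probs)
print("fraction of 1s over 1000 measurements:", samples.mean())
```

Each individual measurement still yields an ordinary bit; the superposition only shows up in the statistics over many runs.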

Several factors contribute to quantum computing’s power, chief among them that a single operation on qubits in superposition acts on many possible values at once. Computer science plays a crucial role in developing the quantum hardware, algorithms, and error correction techniques that make these advancements possible. This is also why quantum computing is often described as the future of artificial intelligence and data science.

Quantum computers use advanced mathematical techniques to reveal patterns and structure in information that classical computers cannot access efficiently.

What is the Difference between Quantum Computing and Classical Computing?

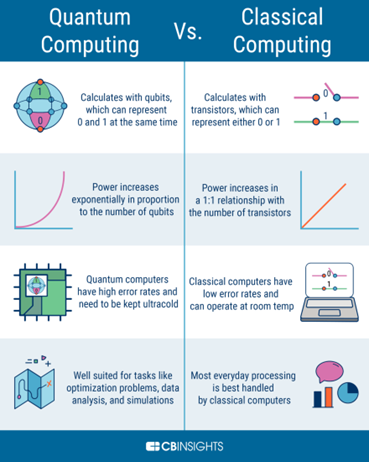

The main difference between classical and quantum computing is that conventional computers use bits that are either 0 or 1, while quantum computers use qubits. Because qubits can represent 0 and 1 simultaneously, a quantum computer can work on many possibilities within a single computation. Qubits are not more reliable than classical bits, however: current qubits are far more sensitive to noise, which is why quantum error correction is an active area of research. The appeal for artificial intelligence applications lies in the potential speedup on specific problems rather than in reliability. Classical algorithms operate step by step on definite values and face limitations in efficiently modeling complex systems or discovering intricate patterns, which quantum algorithms can potentially overcome.

Quantum computing is intended to support and enhance the capabilities of classical computing. Quantum computers are expected to complement, rather than replace, classical computers by taking on specialized workloads. Classical hardware plays a crucial role in supporting quantum operations, often handling pre-processing, optimization, and error decoding tasks that current quantum technologies cannot efficiently perform alone. Hybrid quantum-classical models, which combine classical AI techniques with quantum computing, are being developed to enhance data analysis, pattern recognition, and model fine-tuning, leveraging the strengths of both approaches. Quantum computers are designed to perform specific tasks more efficiently than classical computers, giving developers a new tool for those applications. In the future, quantum computing holds the potential to outperform classical systems on certain complex tasks, aiming for practical quantum advantage as the technology matures.

Image Source: Quantum Computing Vs Classical Computing
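
The hybrid quantum-classical pattern described above can be sketched as a loop in which a classical optimizer repeatedly adjusts the parameter of a quantum circuit. The example below is a minimal sketch under stated assumptions: the "quantum" step is simulated with NumPy rather than run on hardware, the circuit is a single rotation on one qubit, and the function names (`expectation_z`, `parameter_shift_gradient`, `optimize`) are hypothetical.

```python
import numpy as np

def expectation_z(theta):
    """Simulated quantum step: prepare Ry(theta)|0> and return <Z>."""
    state = np.array([np.cos(theta / 2), np.sin(theta / 2)])
    z = np.array([[1.0, 0.0], [0.0, -1.0]])
    return float(state @ z @ state)

def parameter_shift_gradient(theta):
    """Gradient of <Z> from two extra circuit evaluations (parameter-shift rule)."""
    return 0.5 * (expectation_z(theta + np.pi / 2) - expectation_z(theta - np.pi / 2))

def optimize(theta=0.1, learning_rate=0.4, steps=100):
    """Classical step: plain gradient descent on the circuit parameter."""
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta, expectation_z(theta)

theta_opt, energy = optimize()
print(f"theta = {theta_opt:.4f}, <Z> = {energy:.4f}")
```

On real hardware, `expectation_z` would be estimated from repeated measurements, so the optimizer would also have to cope with sampling noise.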

The Positive Impact of Quantum Computing on Artificial Intelligence

- For some workloads, quantum computers may process data faster than conventional computers, which would let AI systems learn and improve faster. If quantum entanglement is utilized, algorithms may also be able to exploit correlations between variables more easily.

- Quantum computers may handle complex optimization problems that are impractical for traditional computers, making AI algorithms run better. This could lead to artificial intelligence that is more powerful than anything we have seen so far, because quantum algorithms can exploit superposition and entanglement, which have no counterpart in classical physics.

- Many AI applications, such as planning and scheduling, can benefit from quantum computing because it helps explore viable solutions to problems.

- AI architectures can be developed more efficiently and at a larger scale using quantum computers.

- There are certain calculations that quantum computers can perform efficiently and traditional computers cannot, which may lead to the development of new AI algorithms. For example, Shor’s algorithm can factor large numbers far faster than the best known classical methods, and quantum computers can simulate quantum systems more efficiently than classical computers.

- With quantum annealing, quantum computers can search for good solutions to optimization problems that are intractable for classical methods. Quantum computers may also help verify the results of AI algorithms to ensure that they are correct.

- Quantum computers could provide powerful simulation environments in which AI systems learn faster and are better prepared for real-world situations. Quantum models may also prove less prone to the catastrophic forgetting seen in classical neural networks, although this is not established. If so, they would be better at lifelong learning, since they could learn new things without forgetting how to do old things.

- AI systems can use quantum computers to protect sensitive data. Moreover, parallel processing can be used to counter cybercrime. Unlike a classical register, which holds only one state at a time, a quantum register can be in a superposition of many states at once, which may help in the search for better algorithms.
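
To make the entanglement mentioned in the list above more concrete, here is a small NumPy simulation of two entangled qubits (a Bell state). It is an illustrative sketch run on a classical machine, with variable names chosen for this example; it shows the kind of correlation between variables that entanglement produces, not an AI algorithm.

```python
import numpy as np

H = np.array([[1, 1], [1, -1]]) / np.sqrt(2)   # Hadamard gate
I2 = np.eye(2)                                  # identity on one qubit
CNOT = np.array([[1, 0, 0, 0],                  # flips the second qubit
                 [0, 1, 0, 0],                  # when the first qubit is 1
                 [0, 0, 0, 1],
                 [0, 0, 1, 0]], dtype=float)

# Start in |00>, put the first qubit in superposition, then entangle.
state = np.zeros(4)
state[0] = 1.0
state = CNOT @ np.kron(H, I2) @ state

# Probabilities of the outcomes 00, 01, 10, 11.
probs = np.abs(state) ** 2
probs = probs / probs.sum()

rng = np.random.default_rng(seed=0)
outcomes = rng.choice(4, size=1000, p=probs)
first_bits, second_bits = outcomes // 2, outcomes % 2

agreement = np.mean(first_bits == second_bits)
print("probabilities:", probs)
print("fraction of runs where both qubits agree:", agreement)
```

Each qubit on its own looks like a fair coin, yet the two results always match, which is the correlation the simulation is meant to show.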

Quantum Computing and Artificial Intelligence Applications

Resolve Complex Problems in a Short Period

Data sets are growing larger and more complex than our current computers can comfortably handle, putting significant pressure on our computing architecture. Some problems that are intractable for today’s computers are expected to be solved far faster on mature quantum hardware. Quantum computers are expected to excel at highly complex problems, such as molecular simulations and chemical modeling, that classical computers struggle with. Additionally, quantum computing’s ability to model and simulate the behavior of physical systems offers significant advantages in fields like chemistry, material science, and quantum chemistry. The use of a quantum model is crucial for understanding, analyzing, and predicting quantum system behaviors, which underpins advancements in quantum machine learning and other AI applications.

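Quantum supremacy is the ability of a quantum computer to perform a computation that no classical computer can complete in a practical amount of time. Google claimed to have achieved it in 2019, reporting that its processor finished in 200 seconds a sampling task that it estimated would take a classical supercomputer about 10,000 years. IBM disputed the claim, arguing that the same task could be run classically in about 2.5 days.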
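
For a sense of scale, the arithmetic below compares the 200-second quantum runtime with two classical estimates for the same task: roughly 10,000 years, the figure Google reported in 2019, and roughly 2.5 days, the figure IBM argued for in its rebuttal. The variable names are illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY

quantum_runtime_s = 200

# Classical runtime estimates for the same sampling task.
google_classical_estimate_s = 10_000 * SECONDS_PER_YEAR
ibm_classical_estimate_s = 2.5 * SECONDS_PER_DAY

speedup_google = google_classical_estimate_s / quantum_runtime_s
speedup_ibm = ibm_classical_estimate_s / quantum_runtime_s

print(f"speedup under Google's estimate: {speedup_google:.2e}")  # about 1.58e+09
print(f"speedup under IBM's estimate: {speedup_ibm:.0f}")        # 1080
```

The gap between the two ratios shows how strongly a supremacy claim depends on the assumed classical baseline.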

Managing Large Datasets

Every day, we generate approximately 2.5 exabytes of data. Ordinary CPUs and GPUs struggle to analyze data at this scale, whereas quantum computers are expected to identify patterns and anomalies in such data more quickly. High-quality training data is essential for AI and quantum models to achieve accurate results and improve scalability. Machine learning techniques are also employed to optimize quantum computing performance and efficiently analyze these large datasets.

Detecting and Combating Fraud

As quantum computing and artificial intelligence are applied to the banking and financial industries, fraud detection should improve. Models trained on quantum computers may recognize patterns that are difficult to detect with traditional equipment, and may also cope with the large volumes of data involved. Advances in algorithms would help as well. AI can also propose and extrapolate new quantum experiments specifically tailored for financial applications, leading to innovative approaches in risk assessment and fraud detection. Additionally, optimizing quantum circuits is crucial for implementing efficient financial algorithms and enhancing the performance of fraud detection models on quantum hardware.

Developing Better Quantum Machine Learning Models

In this era of growing data volumes, companies can no longer rely on traditional computing technologies alone to analyze complex scenarios. These businesses require sophisticated models that can analyze all types of scenarios.

It is estimated that by 2025, the healthcare industry’s data generation will grow at a compound annual rate of 36%, which is 6% faster than manufacturing, financial services, logistics, and e-commerce. By using quantum technology to develop better models, we may be able to treat illnesses more effectively, reduce the risk of financial collapse, and improve coordination.

Quantum computing has been applied experimentally to accelerating DNA sequencing in the medical field and to predicting traffic volumes in transportation. It is also expected to play a crucial role in advancing our understanding of biology and evolution, as the unique behaviors of quantum particles enable advanced modeling of complex biological systems. The integration and calibration of quantum devices are essential for achieving breakthroughs in healthcare and climate modeling, as these devices provide the hardware performance needed for such demanding applications. Quantum processing units serve as the core hardware for running advanced quantum models, supporting the execution of sophisticated algorithms that drive innovation in these fields.

Recent Breakthroughs in Quantum Computing

Superconducting qubits are one core hardware component in quantum computing, playing a crucial role in quantum control, quantum gate implementation, and quantum error correction. Photonic quantum technology is another: its successful implementation relies on photonic integrated circuits that can effectively control photonic quantum states, or qubits, and on single-photon emitters that can be created at chosen locations on a chip. On the second point, physicists from the Helmholtz-Zentrum Dresden-Rossendorf (HZDR), TU Dresden, and Leibniz-Institut für Kristallzüchtung (IKZ) have achieved a significant breakthrough. They have demonstrated the controlled creation of single-photon emitters in silicon at the nanoscale.

In their report in Nature Communications, published on 12 December 2022, the scientists state:

“Previous efforts to create single-photon emitters were hindered by uncontrollable creation in random locations, which limited scalability. The controllable production of individual G and W centers on silicon wafers through focused ion beams (FIB) has been achieved with a high probability. Additionally, a scalable implantation protocol using broad beams that aligns with complementary-metal-oxide-semiconductor (CMOS) technology has been developed to create single telecom emitters on the nanoscale with pinpoint accuracy. These results provide a straightforward path for the creation of photonic quantum processors at an industrial scale, with technology nodes below 100 nm. This research presents a clear and practical route to the development of such processors.”

Currently, most quantum computers are classified as noisy intermediate-scale quantum (NISQ) devices, which face challenges due to hardware noise and limited error correction, making it difficult to achieve scalable, fault-tolerant quantum computing. The transition from NISQ devices to error-corrected quantum computers that use logical qubits is essential for enabling significant breakthroughs in quantum algorithms and in the use of quantum computing for AI.
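
The idea behind a logical qubit, where several noisy physical units encode one more reliable unit, can be illustrated with a classical toy model: a three-bit repetition code decoded by majority vote. This is a simplified sketch, not a real quantum error correction scheme. It assumes independent bit flips with probability `p`, ignores phase errors and syndrome measurement, and uses hypothetical function names.

```python
import numpy as np

def simulated_logical_error_rate(p, trials=200_000, seed=0):
    """Estimate how often majority vote over 3 noisy copies decodes wrongly."""
    rng = np.random.default_rng(seed)
    flips = rng.random((trials, 3)) < p       # True where a copy was flipped
    decoded_wrong = flips.sum(axis=1) >= 2    # two or more flips defeat the vote
    return float(decoded_wrong.mean())

def analytic_logical_error_rate(p):
    """Probability that exactly two or all three copies flip."""
    return 3 * p**2 * (1 - p) + p**3

p = 0.05
print("physical error rate:", p)
print("logical error rate (simulated):", simulated_logical_error_rate(p))
print("logical error rate (analytic): ", analytic_logical_error_rate(p))
```

In this toy model the encoded error rate falls below the physical one whenever `p` is below 0.5, the same trade of extra hardware for reliability that logical qubits aim for.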

Wrapping Up

The potential applications of quantum computing in various fields are rapidly gaining traction. However, there has been little discussion about how this technology will impact artificial intelligence in the future. Quantum computers may solve certain decoding problems much faster than classical computers, and they can also model large-scale systems and molecules. As quantum computing becomes more accessible, it will play a crucial role in the development of artificial intelligence and future applications. For example, the quantum approximate optimization algorithm (QAOA) is being used for combinatorial problem-solving in AI, with advanced models generating optimized QAOA circuits for quantum optimization tasks. Variational quantum algorithms, such as the variational quantum eigensolver (VQE), are also relevant to optimizing AI models. They face challenges such as barren plateaus, and transfer learning techniques are being explored to improve their scalability and performance. Additionally, transformer models are being developed for quantum error correction, embedding syndrome information and predicting errors to improve noise resilience in quantum computing systems. Moreover, quantum computers may eventually handle vast amounts of data, which is essential for training artificial intelligence models.
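
As a concrete picture of the QAOA approach mentioned above, the sketch below simulates a single-layer QAOA circuit for the MaxCut problem on a four-node ring. It is a minimal classical simulation with NumPy: the graph, the grid search over the two angles, and all function names are assumptions made for illustration. Real uses would run the circuit on quantum hardware and use a proper optimizer.

```python
import itertools
import numpy as np

def maxcut_costs(n, edges):
    """Cut size for every n-bit assignment, indexed in binary order."""
    costs = np.zeros(2**n)
    for idx, bits in enumerate(itertools.product([0, 1], repeat=n)):
        costs[idx] = sum(1 for i, j in edges if bits[i] != bits[j])
    return costs

def apply_mixer(state, n, beta):
    """Apply exp(-i * beta * X) to every qubit of the state vector."""
    rx = np.array([[np.cos(beta), -1j * np.sin(beta)],
                   [-1j * np.sin(beta), np.cos(beta)]])
    psi = state.reshape([2] * n)
    for q in range(n):
        # Contract the gate with qubit q, then restore the axis order.
        psi = np.moveaxis(np.tensordot(rx, psi, axes=([1], [q])), 0, q)
    return psi.reshape(-1)

def qaoa_expected_cut(gamma, beta, n, costs):
    """Expected cut size after one QAOA layer with angles (gamma, beta)."""
    state = np.full(2**n, 1 / np.sqrt(2**n), dtype=complex)  # uniform superposition
    state = np.exp(-1j * gamma * costs) * state              # cost (phase) layer
    state = apply_mixer(state, n, beta)                      # mixing layer
    probs = np.abs(state) ** 2
    return float(probs @ costs)

n = 4
edges = [(0, 1), (1, 2), (2, 3), (3, 0)]  # four-node ring
costs = maxcut_costs(n, edges)

# Classical outer loop: a coarse grid search over the two circuit angles.
angles = np.linspace(0, np.pi, 41)
best = max(qaoa_expected_cut(g, b, n, costs) for g in angles for b in angles)

print("average cut of a random assignment:", costs.mean())
print("best expected cut found by one-layer QAOA:", best)
print("true maximum cut:", costs.max())
```

The expected cut lands between the random-guess average and the true maximum, and adding layers (more angle pairs) is the usual way to close that gap at the cost of a deeper circuit.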

The adoption of artificial intelligence is increasing in a wide variety of industries, including retail, manufacturing, medical devices, transportation and logistics, smart cities, utilities, and consumer electronics.