Imagine:

- A camera that can tell family members from burglars and act accordingly: opening the door for family members, sounding a loud alarm when a burglar enters, and informing you about the incident.

- Machine-vision cameras detecting anomalies in a factory and taking the necessary action without any human intervention.

- Drones that understand their flight path and navigate seamlessly by leveraging AI capabilities.

AI and machine learning have revolutionized the way we interact with products and devices, and they have significantly raised our expectations of them. Extending these capabilities to edge devices can bring enormous benefits and open up new applications. The following statistics illustrate the growth of, and demand for, AI at the edge:

By 2022, 80% of smartphones will have on-device AI capabilities, a significant increase from 10% in 2017.

Usage of devices with edge AI capabilities is set to grow 15-fold by the end of 2023, to an estimated 1.2 billion units, and the share of AI tasks performed at the edge rather than in the cloud will grow more than sevenfold, from 6% in 2017 to an estimated 43% in 2022.

AI surrounds us. Facial recognition and fingerprint verification on our mobile devices keep our phones safe and accessible only to designated people. Interactive assistants such as Alexa, Google Assistant, Siri, and Cortana are part of our daily lives. Google Maps knows where we are and helps us reach home or work with effective directions and notifications.

For years, cloud service providers like Microsoft Azure, Amazon Web Services (AWS), and Google Cloud Platform have handled connectivity, storage, database handling, big data analytics, and AI capabilities. Cloud computing services are efficient, massively centralized, full-fledged systems developed to automate IT tasks for a number of industries.

Challenges associated with cloud computing

All the above-discussed cloud computing services come with a set of challenges: latency, privacy, security, and reliability have been some of the pain points.

Latency

Internet traffic is always subject to latency, often as a result of congested networks. At times the delays can last for a long period, which negatively affects applications that require real-time inference and, in turn, their decision-making capabilities.

A video surveillance camera produces about 5 GB of data every hour. Millions of such devices produce an enormous amount of data, all of which must be transferred to the cloud before it can be used. Compression is applied to the data received from these devices, and this often discards important information or insights. This limits the potential impact of AI training in the cloud or the data center.
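To put the 5 GB per hour figure in perspective, here is a rough back-of-the-envelope sketch. It assumes decimal gigabytes (1 GB = 10^9 bytes) and a steady stream, and the fleet size of one million cameras is simply an illustrative reading of "millions of such devices"; the function names are made up for this example.

```python
GB = 10**9  # assumption: decimal gigabytes; the article does not say GB vs GiB


def sustained_mbps(bytes_per_hour: float) -> float:
    """Average uplink rate (megabits per second) needed to ship this much data per hour."""
    return bytes_per_hour * 8 / 3600 / 1e6


def fleet_tb_per_day(bytes_per_hour: float, cameras: int) -> float:
    """Total terabytes produced per day by a fleet of identical cameras."""
    return bytes_per_hour * 24 * cameras / 1e12


per_camera = sustained_mbps(5 * GB)
fleet = fleet_tb_per_day(5 * GB, cameras=1_000_000)  # illustrative fleet size

print(f"{per_camera:.1f} Mbit/s sustained per camera")   # 11.1 Mbit/s
print(f"{fleet:,.0f} TB per day for one million cameras")  # 120,000 TB
```

Under these assumptions, each camera needs roughly 11 Mbit/s of uninterrupted uplink, which is why shipping everything to the cloud uncompressed quickly becomes impractical.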

Examples:

- Autonomous devices need to make decisions within a fraction of a second to avoid any damage to life and property.

- Authentication devices at the site cannot afford delays of seconds.

On the other hand, edge devices have access to the latest data, which reduces latency to a negligible level, and they can initiate tasks on the go. This helps in making better-informed decisions and reduces the leakage and complexity of the IoT ecosystem.
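The "fraction of a second" requirement for autonomous devices becomes more tangible with a little arithmetic. The sketch below estimates how far a vehicle travels while it waits for a decision. The speed and both latency values are illustrative assumptions, not measurements from any real system.

```python
def distance_travelled_m(speed_kmh: float, latency_ms: float) -> float:
    """Metres covered at constant speed while waiting latency_ms for a decision."""
    return speed_kmh / 3.6 * (latency_ms / 1000)


# All three values below are assumptions chosen for illustration.
SPEED_KMH = 100            # vehicle speed
CLOUD_ROUND_TRIP_MS = 100  # device -> cloud -> device
ON_DEVICE_MS = 10          # local inference on the edge device

cloud = distance_travelled_m(SPEED_KMH, CLOUD_ROUND_TRIP_MS)
edge = distance_travelled_m(SPEED_KMH, ON_DEVICE_MS)

print(f"cloud: {cloud:.2f} m travelled before a decision")  # 2.78 m
print(f"edge:  {edge:.2f} m travelled before a decision")   # 0.28 m
```

With these example numbers, the cloud round trip costs almost three metres of travel before the device can react, against under 30 cm for on-device inference.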

Performance

AI can process data much faster on the end device than in the cloud because the data does not need to travel back and forth. Additionally, the device has access to unfiltered data, which helps deliver better results in the shortest time.

Privacy

Cloud service providers have data centers established in many regions across the world. In this scenario, the sharing of personal and sensitive data by organizations and individuals across borders has raised concerns regarding the privacy of that data.

Recent incidents of data breaches and the demand to have strict laws regarding data transfer have further stimulated the need to process data locally.

With AI on the edge, in many cases only the data that requires further evaluation is shared with third parties (cloud service providers), which decreases the amount of data transferred and therefore reduces the probability of a privacy breach.
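One common way to decide which data "requires further evaluation" is a confidence threshold: the edge model handles what it is sure about and forwards only uncertain results. The following is a minimal sketch of that idea. The `Inference` type, the labels, the scores, and the 0.8 threshold are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Inference:
    frame_id: int
    label: str
    confidence: float  # model score in [0, 1]


def select_for_upload(results, threshold=0.8):
    """Return only the inferences the edge model is unsure about."""
    return [r for r in results if r.confidence < threshold]


# Hypothetical results produced locally by an edge model.
results = [
    Inference(1, "family_member", 0.97),
    Inference(2, "unknown_person", 0.55),
    Inference(3, "family_member", 0.91),
    Inference(4, "unknown_person", 0.62),
]

to_cloud = select_for_upload(results)
print([r.frame_id for r in to_cloud])  # [2, 4]
```

Here only two of the four frames leave the device; the confident results are acted on locally and never shared.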

Bandwidth

As mentioned earlier, in order to generate insights, data needs to be pumped to the cloud. Connection speed varies in different parts of the world and often there are scenarios where there is difficulty in transferring data from/to the server from remote locations.

On the other hand, AI at the edge solves this problem, because only the relevant part of the data needs to be transferred to the cloud for further analysis. This reduces the load on the cloud, as it requires less bandwidth and less storage, ultimately reducing storage costs.

It also reduces the power required to maintain the facility, as less data transmission means less load on the servers and less heat. All this ultimately reduces the excess power that cloud service providers need to maintain the entire facility.

How to overcome these challenges

Now that we have discussed the possible challenges we could face, let’s take a look at how we can address them.

Distributed computing

Most microcontrollers (MCUs) in the IoT ecosystem have one task at hand: transferring data from one end to the other. For the majority of the time, they remain idle.

A single such device will not be able to perform AI computations on its own, but with the help of advanced algorithms, the combined power of many devices can be used to perform AI-intensive computations.
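As a rough illustration of pooling idle devices, the sketch below splits one matrix-vector product (the core of a dense neural-network layer) row-wise across several devices and concatenates the partial results. This is only a single-process simulation: the "devices" run one after another, the function names are hypothetical, and a real deployment would also need networking, scheduling, and fault handling.

```python
def split_rows(matrix, n_devices):
    """Partition rows into n_devices contiguous chunks of near-equal size."""
    base, extra = divmod(len(matrix), n_devices)
    chunks, start = [], 0
    for i in range(n_devices):
        size = base + (1 if i < extra else 0)
        chunks.append(matrix[start:start + size])
        start += size
    return chunks


def device_compute(rows, x):
    """Work done by one device: dot product of each assigned row with x."""
    return [sum(w * v for w, v in zip(row, x)) for row in rows]


def distributed_matvec(matrix, x, n_devices):
    """Simulate the coordinator: fan out row chunks, then concatenate results."""
    partials = [device_compute(chunk, x) for chunk in split_rows(matrix, n_devices)]
    return [y for part in partials for y in part]


W = [[1, 0, 2], [0, 1, 0], [3, 1, 1], [2, 2, 2]]  # toy layer weights
x = [1, 2, 3]                                      # toy input vector

print(distributed_matvec(W, x, n_devices=3))  # [7, 2, 8, 12]
```

Each device only ever sees its own slice of the weights, so the memory and compute needed per device shrink as more devices join.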

AI co-processors

GPUs have transformed the gaming industry with their capability to perform parallel computations; similarly, AI co-processors can help perform advanced computations at the edge.

Example: Amazon DeepLens, a deep learning camera that makes real-time inferences with broad framework support.

Advanced algorithms

Much research is in progress to mimic the activity of the brain and use it to develop algorithms that need less data to understand and make better decisions. Example: one such technique is transfer learning, which reuses what a model has learned for one application to make decisions in other, related applications.
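A toy sketch of the transfer-learning idea follows: a feature extractor learned elsewhere is kept frozen, and only a small classification head is trained on a handful of new examples. Everything here is a stand-in. `pretrained_features` is a hand-written, hypothetical replacement for a real pre-trained network, and the four training points are invented for illustration.

```python
import math


def pretrained_features(x):
    """Hypothetical frozen feature extractor, standing in for a pre-trained model."""
    a, b = x
    return [a + b, a - b]


def train_head(samples, labels, epochs=200, lr=0.1):
    """Fit only a logistic-regression head on top of the frozen features (SGD)."""
    feats = [pretrained_features(x) for x in samples]
    w, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for f, y in zip(feats, labels):
            p = 1 / (1 + math.exp(-(w[0] * f[0] + w[1] * f[1] + bias)))
            err = p - y  # gradient of the log loss with respect to the logit
            w = [wi - lr * err * fi for wi, fi in zip(w, f)]
            bias -= lr * err
    return w, bias


def predict(x, w, bias):
    f = pretrained_features(x)
    return 1 if w[0] * f[0] + w[1] * f[1] + bias > 0 else 0


# New task with only four labelled examples: is the first value larger?
samples = [(2, 1), (1, 2), (3, 0), (0, 3)]
labels = [1, 0, 1, 0]

w, bias = train_head(samples, labels)
print(predict((5, 1), w, bias), predict((1, 5), w, bias))  # 1 0
```

Because the extractor is never updated, only three numbers are learned, which is why a few examples are enough. That is the property that makes the approach attractive on constrained edge hardware.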

Global companies on their edge AI journey

Advanced hardware is required to process vast streams of data, distribute the workloads, and stay within power, thermal, and size constraints. Major cloud service providers have collaborated with semiconductor companies to deliver power-efficient AI chips.

Amazon joined hands with Intel to develop Amazon DeepLens, a wireless camera with AI inferencing capabilities. It is integrated with Amazon SageMaker, where developers can build, train, and validate a model to their requirements, with support for deep learning frameworks like Caffe and TensorFlow. Apart from that, it can use other AWS services like AWS Lambda and AWS IoT Greengrass.

Google developed its own hardware chip, Edge TPU, together with the Cloud IoT Edge software stack, where users can develop, train, and validate their models in the cloud.

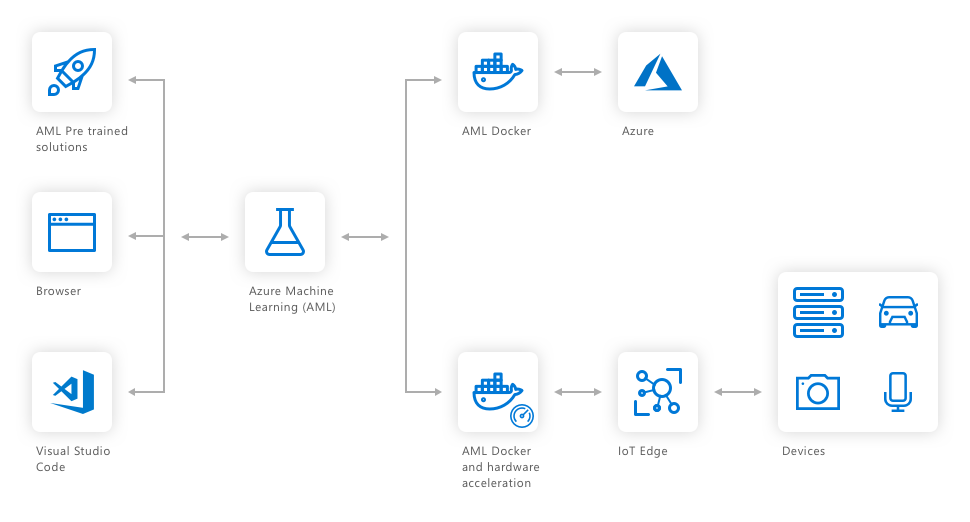

Qualcomm and Microsoft collaborated and played to their strengths (Qualcomm's hardware expertise, mainly in SoCs and power-efficient chips, and Microsoft's software stack) to develop the Vision AI Developer Kit. It uses Azure Machine Learning to develop the models for the end-user application and Azure IoT Edge to deploy the models from the cloud to the kit. It also gives the user access to other Azure services, like Azure Stream Analytics and Azure Cognitive Services, and can run them locally on the edge.

The Qualcomm Vision platform has an AI engine with a heterogeneous architecture that performs advanced computing while remaining energy efficient, and it supports deep learning frameworks such as TensorFlow, Caffe, and ONNX.

The Qualcomm Neural Processing Engine, in conjunction with Microsoft Azure, helps optimize the AI models, which further reduces lag and improves efficiency for end-user applications. In addition to an AI Vision Kit, it has power-efficient devices with Qualcomm Quick Charge 4 technology for fast charging and security provided by Qualcomm processor security.

This partnership has enabled developers to deploy Azure AI solutions by selecting a pre-trained model and customizing it to their needs. The model can be trained through a simple click interface.

Impact of AI on different industry sectors

The following are some of the areas where the AI Vision Kit can be a game changer:

Retail

The Vision Intelligence platform supports 4K Ultra HD video capture at 60 FPS and 5.7K video capture at 30 FPS. Cameras placed on different shelves can monitor the movement of people for route optimization and generate valuable analytics, such as the time spent at a particular product and the customer's emotional response to it. They can also make recommendations to the customer based on the products selected.

Home Automation

The Vision Intelligence platform supports a 16 MP dual camera or a single camera of up to 32 MP, which can capture an image of a visitor at the door and, with the help of deep learning techniques, validate and permit their entry. With the help of Azure ML, users can customize the home automation system to their requirements.

Industrial

An AI-based computer vision platform can also spot manufacturing defects with almost no lag and facilitate removal of the suspect part from the supply chain without any human intervention.

Traffic Management

The Vision platform can also enable traffic cameras to identify vehicles instantly through optical character recognition of license plates. This helps maintain the flow of traffic and reduce congestion on the roads.

Navigation

Autonomous robots with GPS and video capabilities can navigate easily in different terrains and perform critical tasks that are difficult for humans to perform.

Surveillance

Drones equipped with Vision Intelligence Platform capabilities can use audio and video to detect anomalies in sensitive areas. This reduces the risk to human life at borders and enables quick deployment of response forces.

Maintaining a Cloud-Edge Balance

Data centers and the cloud will continue to be the backbone of the IoT system. They can store more data than an IoT device, learn more from that data, and help train and develop complex machine learning models. Machine learning at the edge will continue to be the first choice where immediate response is of prime importance and where local processing reduces privacy, data compliance, and security risks.

AI at the cloud can process the edge insights to extract lessons from larger data sets. Over a longer period of time, these lessons can help organizations plan out their operations in an effective manner.

AI at the edge can improve our safety, flag a defect, and respond quickly to anomalies and patterns that might take humans much longer to comprehend. It will allow us to access the untapped power of devices, unleash the potential impact of AI, and establish its usage in the true sense. To learn more about how your business can build and benefit from AI capabilities at the edge, get in touch with us.