Speech and Audio processing both deal with audible data, but they cover different frequency ranges: Speech processing handles frequencies from 20 Hz to 4 kHz, whereas Audio processing handles frequencies from 20 Hz to 20 kHz. There is one major difference between the two: Speech Compression is based on a model of the Human Vocal Tract, whereas Audio Compression is based on a model of the Human Ear System.

Speech processing is a subset of Digital Signal Processing. Certain properties of the human vocal tract are used along with some mathematical techniques to achieve compression of speech signals for streaming the data over VoIP and Cellular networks.

Speech Processing is broadly categorized into:

- Speech Coding: Compressing speech to reduce the size of data by removing redundancies in the data for storing and streaming purposes.

- Speech Recognition: Identifying spoken words and converting them into text.

- Speaker Verification/Identification: Ascertaining the identity of the speaker, for example in security applications in the banking sector.

- Speech Enhancement: Removing noise and increasing gain to make recorded speech more audible.

- Speech Synthesis: Artificial generation of human speech for text-to-speech conversion.

Anatomy of the Human Vocal Tract from the Speech Processing Perspective

The human ear is most sensitive to signal energy between 50 Hz and 4 kHz. Speech signals comprise a sequence of sounds. When air is forced out of the lungs, the acoustic excitation of the vocal tract generates the speech signal. The lungs act as the air supply during speech production. The vocal cords (as seen in the figure below) are two membranes that vary the area of the glottis. When we breathe, the vocal cords remain open, but when we speak, they open and close.

When air is forced out of the lungs, air pressure builds up near the vocal cords. Once the pressure reaches a certain threshold, the vocal cords/folds open up and the flow of air through them causes the membranes to vibrate. The frequency of vibration depends on the length of the vocal cords and the tension in them. This frequency is called the fundamental frequency or pitch frequency, and it determines the pitch of the speaker's voice. The fundamental frequency for humans is statistically found to lie in the following ranges:

- 50 Hz to 200 Hz for Men

- 150 Hz to 300 Hz for Women and

- 200 Hz to 400 Hz for Children

The vocal cords in women and children tend to be shorter, so their voices have a higher fundamental frequency than men's.
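To make the idea of a fundamental frequency concrete, the sketch below estimates the pitch of a synthetic voiced frame by autocorrelation: the lag at which the frame best matches a delayed copy of itself is taken as the pitch period. This is only one common approach and is not prescribed by the text above. The function name `estimate_pitch_hz`, the 50 Hz to 400 Hz search range (taken from the ranges listed above), and the test signal are assumptions made for illustration.

```python
import math

def estimate_pitch_hz(frame, sample_rate, f_min=50.0, f_max=400.0):
    """Estimate the fundamental frequency of a voiced frame by autocorrelation."""
    lag_min = int(sample_rate / f_max)  # shortest pitch period considered
    lag_max = int(sample_rate / f_min)  # longest pitch period considered
    best_lag, best_score = lag_min, float("-inf")
    for lag in range(lag_min, lag_max + 1):
        # Correlate the frame with a copy of itself delayed by `lag` samples.
        score = sum(frame[n] * frame[n - lag] for n in range(lag, len(frame)))
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag

# Synthetic stand-in for voiced speech: a 125 Hz tone plus its second
# harmonic, 40 ms long at an assumed 8 kHz sampling rate.
SAMPLE_RATE = 8000
frame = [math.sin(2 * math.pi * 125 * n / SAMPLE_RATE)
         + 0.5 * math.sin(2 * math.pi * 250 * n / SAMPLE_RATE)
         for n in range(320)]
pitch = estimate_pitch_hz(frame, SAMPLE_RATE)
print(f"Estimated pitch: {pitch:.1f} Hz")
```

Real speech is noisier than this test tone, so practical pitch trackers usually add pre-filtering, normalization, and smoothing across frames.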

Human speech can be broadly categorized into three types of sounds:

- Voiced Sounds: The sounds produced by vibration of vocal cords when the air flows from the lungs through the vocal tract e.g. a, b, m, n etc. The voiced sounds carry low frequency components. During voiced speech production, the vocal cords are closed for most of the time.

- Unvoiced Sounds: The vocal cords do not vibrate for unvoiced sounds. The continuous flow of the air through the vocal tract causes the unvoiced sounds e.g. shh, sss, f, etc. The unvoiced sounds carry high frequency components. During unvoiced speech production, the vocal cords are open for most of the time.

- Other Sounds: These sounds can be categorized as:

    - Nasal Sounds: Produced when the vocal tract is acoustically coupled with the nasal tract, so that sound is radiated through the nostrils and lips, e.g. m, n, ing etc.

    - Plosive Sounds: These sounds result from a build-up and sudden release of pressure behind a closure at the front of the vocal tract, e.g. p, t, b etc.
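Because voiced sounds carry mostly low-frequency components and unvoiced sounds mostly high-frequency components, a simple way to tell them apart is the zero-crossing rate (how often the waveform changes sign). The sketch below is a minimal illustration under stated assumptions: the function names, the 0.25 threshold, and the synthetic test signals (a 150 Hz tone and white noise) are all hypothetical choices, not values from this article.

```python
import math
import random

def zero_crossing_rate(frame):
    """Fraction of adjacent sample pairs whose signs differ."""
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0))
    return crossings / (len(frame) - 1)

def classify_frame(frame, zcr_threshold=0.25):
    # The 0.25 threshold is a hypothetical value picked for this synthetic demo.
    return "unvoiced" if zero_crossing_rate(frame) > zcr_threshold else "voiced"

SAMPLE_RATE = 8000
# Stand-in for a voiced sound: a low-frequency 150 Hz tone.
voiced_like = [math.sin(2 * math.pi * 150 * n / SAMPLE_RATE) for n in range(240)]
# Stand-in for an unvoiced sound: white noise, which is rich in high frequencies.
rng = random.Random(0)
unvoiced_like = [rng.uniform(-1.0, 1.0) for _ in range(240)]

print(classify_frame(voiced_like), classify_frame(unvoiced_like))
```

In practice the zero-crossing rate is usually combined with other features such as frame energy, since a single threshold is not reliable on real recordings.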

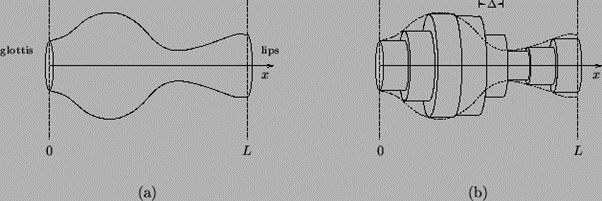

The vocal tract is a vase-shaped acoustic tube that is terminated at one end by the vocal cords and at the other end by the lips.

The cross-sectional area of the vocal tract changes depending on the sound we intend to produce. A formant frequency is a frequency around which there is a high concentration of energy. Statistically, it has been observed that there is approximately one formant frequency per kHz. Hence, we can observe a total of 3-4 formant frequencies in the human voice frequency range of 4 kHz.
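The "one formant per kHz" observation can be reproduced with a rough textbook approximation that is not part of this article: treat the vocal tract as a uniform tube closed at the vocal cords and open at the lips, whose resonances fall at odd multiples of c / (4L). The tube length of 17 cm and the speed of sound of 340 m/s used below are assumed typical values, and `uniform_tube_formants` is a hypothetical helper name.

```python
def uniform_tube_formants(length_m=0.17, speed_of_sound=340.0, max_hz=4000.0):
    """Resonances of a uniform tube closed at one end and open at the other.

    F_n = (2n - 1) * c / (4 * L) for n = 1, 2, 3, ...
    """
    formants = []
    n = 1
    while True:
        f = (2 * n - 1) * speed_of_sound / (4.0 * length_m)
        if f > max_hz:
            break
        formants.append(f)
        n += 1
    return formants

formants = uniform_tube_formants()
print([round(f) for f in formants])
```

Under these assumptions the resonances are spaced about 1 kHz apart within the 4 kHz band. A real vocal tract is not uniform, so actual formants shift with every sound produced.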

Since the bandwidth of human speech is taken to be 0 to 4 kHz, speech signals are sampled at 8 kHz, in line with the Nyquist criterion, to avoid aliasing.
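The effect of violating the Nyquist criterion can be shown with a short sketch. At a sampling rate of 8 kHz, a 5 kHz cosine (above the 4 kHz limit) produces exactly the same samples as a 3 kHz cosine, so the two cannot be told apart after sampling. The helper names `sample_cosine` and `alias_frequency` are made up for this illustration.

```python
import math

def sample_cosine(freq_hz, sample_rate_hz, num_samples):
    """Return num_samples samples of a unit-amplitude cosine at freq_hz."""
    return [math.cos(2 * math.pi * freq_hz * n / sample_rate_hz)
            for n in range(num_samples)]

def alias_frequency(freq_hz, sample_rate_hz):
    """Frequency in [0, fs/2] that a sampled tone at freq_hz is indistinguishable from."""
    folded = freq_hz % sample_rate_hz
    return min(folded, sample_rate_hz - folded)

FS = 8000
in_band = sample_cosine(3000, FS, 16)      # below fs/2, represented correctly
out_of_band = sample_cosine(5000, FS, 16)  # above fs/2, folds back to 3000 Hz
max_diff = max(abs(a - b) for a, b in zip(in_band, out_of_band))
print(alias_frequency(5000, FS), max_diff < 1e-9)
```

This is why the signal is normally low-pass filtered to the 4 kHz band before it is sampled at 8 kHz.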

Speech Production Model

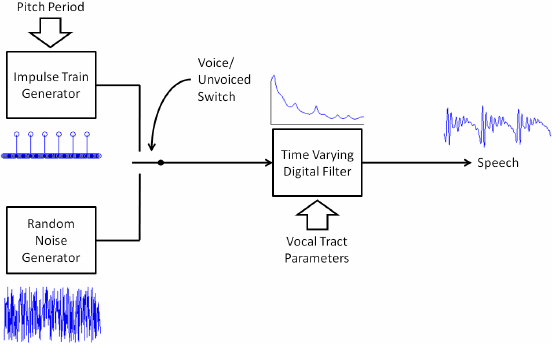

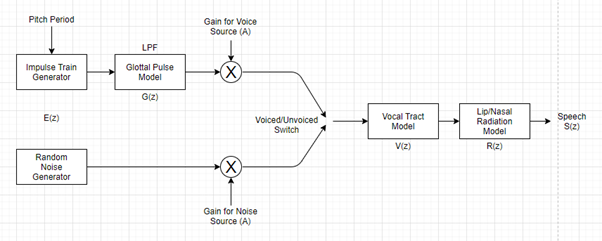

Depending on the content of the speech signal (voiced or unvoiced), the source signal consists of a series of pulses (for voiced sounds) or random noise (for unvoiced sounds). This signal passes through the vocal tract, which behaves as a spectral shaping filter, i.e. the frequency response of the vocal tract is imposed on the incoming signal. The shape and size of the vocal tract define its frequency response, which is why voices differ from person to person.

Developing an accurate speech production model requires a filter-based model of the human speech production mechanism. The source of excitation and the vocal tract are assumed to be independent of each other, so the two are modeled separately. To model the vocal tract, its characteristics are assumed to stay fixed over a 10 ms period. Thus, once every 10 ms the vocal tract configuration changes, giving rise to new vocal tract parameters (i.e. resonant/formant frequencies).
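The 10 ms assumption translates directly into frame-based processing: at an 8 kHz sampling rate, each 10 ms frame holds 80 samples, and one set of vocal tract parameters is derived per frame. A minimal sketch follows, assuming non-overlapping frames and a hypothetical helper name `split_into_frames`. Real codecs often use other frame lengths or overlapping windows.

```python
def split_into_frames(samples, sample_rate_hz=8000, frame_ms=10):
    """Split samples into consecutive, non-overlapping frames of frame_ms milliseconds.

    A trailing partial frame is dropped.
    """
    frame_len = sample_rate_hz * frame_ms // 1000  # 80 samples at 8 kHz and 10 ms
    last_start = len(samples) - frame_len
    return [samples[i:i + frame_len] for i in range(0, last_start + 1, frame_len)]

one_second = [0.0] * 8000  # placeholder for one second of speech samples
frames = split_into_frames(one_second)
print(len(frames), len(frames[0]))  # 100 frames of 80 samples each
```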

Such a model must accurately represent the following:

- The excitation technique of the human speech production mechanism.

- The lip-nasal voice process.

- The operational intricacies of the vocal tract.

- Voiced speech and

- Unvoiced speech.

S(z) = E(z) · G(z) · A · V(z) · R(z)

Where:

S(z) => Speech at the Output of the Model

E(z) => Excitation Model

G(z) => Glottal Model

A => Gain Factor

V(z) => Vocal Tract Model

R(z) => Radiation Model

Excitation Model: The output of the excitation function of the model varies with the type of speech being produced.

- During voiced speech, the excitation consists of a series of impulses spaced one pitch period apart.

- During unvoiced speech, the excitation is a white noise/random noise type signal.
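The two excitation types described above can be sketched as follows. This is an illustrative simplification: the function names, the unit impulse amplitude, and the use of Gaussian noise with a fixed seed are assumptions, not details taken from a specific codec.

```python
import random

def voiced_excitation(num_samples, pitch_hz, sample_rate_hz=8000):
    """Impulse train with one unit impulse per pitch period."""
    period = round(sample_rate_hz / pitch_hz)  # pitch period in samples
    return [1.0 if n % period == 0 else 0.0 for n in range(num_samples)]

def unvoiced_excitation(num_samples, seed=0):
    """White Gaussian noise; the seed only makes the demo reproducible."""
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(num_samples)]

# 30 ms of excitation at 8 kHz: a 100 Hz pitch gives one impulse every 80 samples.
voiced = voiced_excitation(240, pitch_hz=100)
unvoiced = unvoiced_excitation(240)
print([n for n, x in enumerate(voiced) if x == 1.0])
```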

Glottal Model: The glottal model is used exclusively for the voiced component of human speech. The glottal flow helps distinguish between speakers in speech recognition and speech synthesis systems.

Gain Factor: The energy of the sound is dependent on the gain factor. Generally, the energy for the voiced speech is many times greater than that of the unvoiced speech.

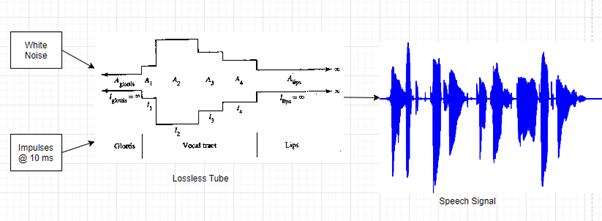

Vocal Tract Model: The vocal tract is modeled as a chain of short, cylindrical lossless tubes (as shown in Figure 4), each with its own resonant frequency. The dimensions of the tubes differ from person to person. Because the resonant frequency depends on the shape of the tube, voices differ from person to person.

The vocal tract model described above is typically used in low bit-rate speech codecs, speech recognition systems, speaker authentication/identification systems, and speech synthesizers. The coefficients of the vocal tract model must be derived for every frame of speech. The typical technique for deriving these coefficients in speech codecs is Linear Predictive Coding (LPC). LPC vocoders can achieve bit-rates of 1.2 to 4.8 kbps, and LPC is therefore categorized as a low-quality, moderate-complexity, low bit-rate algorithm.

Using LPC, the current speech sample is predicted as a weighted sum of past speech samples.

In the time domain the equation for speech can be roughly represented as follows:

Current Sample of Speech = Sum of (Coefficients × Past Samples of Speech) + (Gain × Excitation)
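Written per sample, the relation above is s[n] = a1·s[n-1] + a2·s[n-2] + ... + ap·s[n-p] + G·e[n]. The sketch below synthesizes a signal from known coefficients using this relation and then recovers the coefficients from the signal. The recovery step assumes the autocorrelation method with the Levinson-Durbin recursion, a widely used way of solving for LPC coefficients that this article does not itself specify. All function names, the second-order coefficients, and the noise excitation are illustrative assumptions.

```python
import random

def synthesize(coeffs, excitation, gain=1.0):
    """s[n] = sum_k coeffs[k-1] * s[n-k] + gain * excitation[n]."""
    out = []
    for n, e in enumerate(excitation):
        past = sum(a * out[n - k]
                   for k, a in enumerate(coeffs, start=1) if n - k >= 0)
        out.append(past + gain * e)
    return out

def autocorrelation(frame, max_lag):
    """Autocorrelation values r[0..max_lag] of the frame."""
    return [sum(frame[n] * frame[n - k] for n in range(k, len(frame)))
            for k in range(max_lag + 1)]

def levinson_durbin(r, order):
    """Solve the LPC normal equations.

    Returns (predictor coefficients a_1..a_order, residual energy).
    """
    a = [0.0] * (order + 1)
    error = r[0]
    for i in range(1, order + 1):
        acc = r[i] - sum(a[j] * r[i - j] for j in range(1, i))
        k = acc / error  # reflection coefficient for this stage
        new_a = a[:]
        new_a[i] = k
        for j in range(1, i):
            new_a[j] = a[j] - k * a[i - j]
        a = new_a
        error *= (1.0 - k * k)
    return a[1:], error

# Round trip: build a signal from known coefficients, then estimate them back.
rng = random.Random(0)
true_coeffs = [1.3, -0.6]  # hypothetical stable 2nd-order "vocal tract"
excitation = [rng.gauss(0.0, 1.0) for _ in range(4000)]
speech_like = synthesize(true_coeffs, excitation)
estimated, residual_energy = levinson_durbin(autocorrelation(speech_like, 2), 2)
print([round(a, 2) for a in estimated])
```

The estimated coefficients should land close to the true ones, with a small error that shrinks as more samples are used. Speech codecs typically use a higher prediction order and apply a window to each frame before computing the autocorrelation.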

Summary

The properties of the speech signals are dependent on the human speech production system. The Speech Production Model has been derived from the fundamental principles of the human speech production system.

Hence, understanding the features of the human speech production system is essential for designing algorithms for speech compression, speech synthesis, and speech recognition. The Speech Production Model is used in converting analog speech into digital form for transmission through Telephony Applications (Cellular telephones, wired telephones and VoIP streaming on the internet), in text-to-speech conversion, and in speech coding, where compressing speech signals to lower bit-rates makes efficient use of bandwidth and accommodates more users in the same bandwidth.

We at eInfochips provide software development services for DSP middleware i.e. Porting, Optimization, Support and Maintenance solutions involving Speech, Audio and Multimedia Codecs for various platforms.

eInfochips provides services like Integration, Testing, and validation of Multimedia codecs. We also cater to porting and optimizations for deep learning algorithms, algorithms for 3D Sound, Pre-processing and Post-processing of audio and video blocks. Implementation and parallelization of custom algorithms on multi-core platforms too are our forte. For more information on audio and speech processing please contact us right away.